Teachable Machine 2.0 makes AI easier for everyone

People are using AI to explore all kinds of ideas—identifying the roots of bad traffic in Los Angeles, improving recycling rates in Singapore, and even experimenting with dance. Getting started with your own machine learning projects might seem intimidating, but Teachable Machine is a web-based tool that makes it fast, easy, and accessible to everyone.

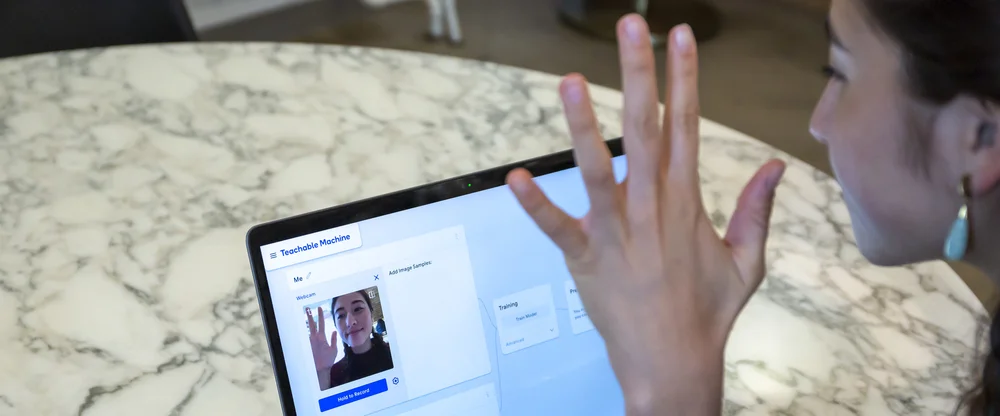

The first version of Teachable Machine let anyone teach their computer to recognize images using a webcam. For a lot of people, it was their first time experiencing what it’s like to train their own machine learning model: teaching the computer how to recognize patterns in data (images, in this case) and assign new data to categories.

Since then, we’ve heard from lots of people who want to take their Teachable Machine models one step further and use them in their own projects. Teachable Machine 2.0 lets you train your own machine learning model with the click of a button, no coding required, and export it to websites, apps, physical machines and more. Teachable Machine 2.0 can also recognize sounds and poses, like whether you're standing or sitting down.

We collaborated with educators, artists, students and makers of all kinds to figure out how to make the tool useful for them. For example, education researcher Blakeley H. Payne and her teammates have been using Teachable Machine as part of an open-source curriculum that teaches middle-schoolers about AI through a hands-on learning experience.

“Parents—especially of girls—often tell me their child is nervous to learn about AI because they have never coded before,” Blakeley said. “I love using Teachable Machine in the classroom because it empowers these students to be designers of technology without the fear of ‘I've never done this before.’”

But it’s not just for teaching. Steve Saling is an accessibility technology expert who used it to explore improving communication for people with impaired speech. Yining Shi has been using Teachable Machine with her students in the Interactive Telecommunications Program at NYU to explore its potential for game design. And at Google, we’ve been using it to make physical sorting machines easier for anyone to build. Here’s how it all works:

Gather examples

You can use Teachable Machine to recognize images, sounds or poses. Upload your own image files, or capture examples live with a mic or webcam. These examples stay on-device, never leaving your computer unless you choose to save your project to Google Drive.

Gathering image examples.

Train your model

With the click of a button, Teachable Machine will train a model based on the examples you provided. All the training happens in your browser, so everything stays on your computer.

Training a model with the click of a button.
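If you're curious what "training on examples" means, here's a toy sketch in Python. It is not how Teachable Machine works under the hood; it's a much simpler stand-in technique (a nearest-centroid classifier) that shows the same idea: learn from labeled examples, then assign new data to a category. The labels, the two-number "features" and the function names are all made up for illustration.

```python
from math import dist
from statistics import fmean


def train(examples):
    """Average the examples of each class into one representative point.

    `examples` maps a class label to a list of equal-length feature vectors.
    (Hypothetical data format, chosen only for this illustration.)
    """
    centroids = {}
    for label, vectors in examples.items():
        # zip(*vectors) groups the values feature by feature.
        centroids[label] = [fmean(column) for column in zip(*vectors)]
    return centroids


def classify(centroids, vector):
    """Return the label whose centroid is closest to the new vector."""
    return min(centroids, key=lambda label: dist(centroids[label], vector))


# Made-up two-number "features" standing in for real pose data.
examples = {
    "standing": [[0.9, 0.1], [0.8, 0.2]],
    "sitting": [[0.2, 0.9], [0.1, 0.8]],
}

model = train(examples)
print(classify(model, [0.85, 0.15]))  # closest to the "standing" examples
```

Adding more varied examples per class moves each average, which is a rough picture of why adding or changing examples changes how a model behaves.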

Test and tweak

Play with your model on the site to see how it performs. Not to your liking? Tweak the examples and see how it does.

Testing out the model instantly using a webcam.

Use your model

The model you created is powered by TensorFlow.js, an open-source library for machine learning from Google. You can export it to use in websites, apps, and more. You can also save your project to Google Drive so you can pick up where you left off.
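Once a model is running in your own project, a common next step is deciding what to do with its output. Here's a minimal sketch in Python, assuming your code ends up with a list of class labels and a matching list of probabilities. That shape, the `top_prediction` name and the 0.6 threshold are assumptions for illustration, not part of any Teachable Machine export.

```python
def top_prediction(labels, probabilities, threshold=0.6):
    """Return (label, probability) for the most likely class.

    Returns None when the model is not confident enough, so your project
    can ignore uncertain guesses. The threshold is an arbitrary example value.
    """
    if not labels or len(labels) != len(probabilities):
        raise ValueError("labels and probabilities must be non-empty and the same length")

    # Find the position of the highest probability.
    best = max(range(len(labels)), key=lambda i: probabilities[i])
    if probabilities[best] < threshold:
        return None
    return labels[best], probabilities[best]


# Hypothetical output from a two-class image model.
print(top_prediction(["apple", "banana"], [0.2, 0.8]))
print(top_prediction(["apple", "banana"], [0.5, 0.5]))
```

Returning nothing below the threshold is one way to keep a sorting machine or game from reacting to every shaky guess.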

Ready to dive in? Here are some helpful links and inspiration:

- Instructional video: Getting Started with Teachable Machine by The Coding Train

- Beginner-friendly tutorials: Recognize fruit, poses, or sounds you make

- Lesson plans: MIT AI Ethics Education Curriculum by Blakeley H. Payne (Personal Robots group, MIT Media Lab), Teachable Arcade Lesson Plan by Ryan Mather

- Physical computing projects: Tiny Sorter (Arduino-based) and Teachable Sorter (Coral-based)

Drop us a line with your thoughts and ideas, and post what you make, or follow along with #teachablemachine. We can’t wait to see what you create. Try it out at g.co/teachablemachine.