How Google autocomplete works in Search

Autocomplete is a feature within Google Search designed to make it faster to complete searches that you’re beginning to type. In this post—the second in a series that goes behind-the-scenes about Google Search—we’ll explore when, where and how autocomplete works.

Using autocomplete

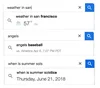

Autocomplete is available most anywhere you find a Google search box, including the Google home page, the Google app for iOS and Android, the quick search box from within Android and the “Omnibox” address bar within Chrome. Just begin typing, and you’ll see predictions appear:

In the example above, you can see that typing the letters “san f” brings up predictions such as “san francisco weather” or “san fernando mission,” making it easy to finish entering your search on these topics without typing all the letters.

Sometimes, we’ll also help you complete individual words and phrases, as you type:

Autocomplete is especially useful for those using mobile devices, making it easy to complete a search on a small screen where typing can be hard. For both mobile and desktop users, it’s a huge time saver all around. How much? Well:

- On average, it reduces typing by about 25 percent

- Cumulatively, we estimate it saves over 200 years of typing time per day. Yes, per day!

Predictions, not suggestions

You’ll notice we call these autocomplete “predictions” rather than “suggestions,” and there’s a good reason for that. Autocomplete is designed to help people complete a search they were intending to do, not to suggest new types of searches to be performed. These are our best predictions of the query you were likely to continue entering.

How do we determine these predictions? We look at the real searches that happen on Google and show common and trending ones relevant to the characters that are entered and also related to your location and previous searches.

The predictions change in response to new characters being entered into the search box. For example, going from “san f” to “san fe” causes the San Francisco-related predictions shown above to disappear, with those relating to San Fernando then appearing at the top of the list:

That makes sense. It becomes clear from the additional letter that someone isn’t doing a search that would relate to San Francisco, so the predictions change to something more relevant.
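As a rough illustration of the idea, here is a toy sketch of prefix-based prediction: past queries that start with the typed characters are ranked by how often they were searched. It is not a description of Google's actual systems. The `predict` function and the `QUERY_COUNTS` data are hypothetical, and the sketch ignores trending signals, location and previous searches.

```python
from collections import Counter

def predict(prefix, query_counts, limit=10):
    """Return up to `limit` queries starting with `prefix`, most frequent first."""
    prefix = prefix.lower()
    matches = [(q, n) for q, n in query_counts.items() if q.startswith(prefix)]
    # Sort by descending count, then alphabetically so ties are deterministic.
    matches.sort(key=lambda item: (-item[1], item[0]))
    return [q for q, _ in matches[:limit]]

# Hypothetical search counts, invented purely for illustration.
QUERY_COUNTS = Counter({
    "san francisco weather": 900,
    "san francisco giants": 700,
    "san fernando mission": 300,
    "san fernando valley": 250,
    "san felipe": 100,
})

print(predict("san f", QUERY_COUNTS, limit=3))
# ['san francisco weather', 'san francisco giants', 'san fernando mission']
print(predict("san fe", QUERY_COUNTS, limit=3))
# ['san fernando mission', 'san fernando valley', 'san felipe']
```

Adding the single letter “e” removes every San Francisco query from the candidate set, which mirrors the behavior described above.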

Why some predictions are removed

The predictions we show are common and trending ones related to what someone begins to type. However, Google removes predictions that are against our autocomplete policies, which bar:

- Sexually explicit predictions that are not related to medical, scientific, or sex education topics

- Hateful predictions against groups and individuals on the basis of race, religion or several other demographics

- Violent predictions

- Dangerous and harmful activity in predictions

In addition to these policies, we may remove predictions that we determine to be spam, that are closely associated with piracy, or in response to valid legal requests.
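Conceptually, removal works as a filter applied to candidate predictions before they are shown. The sketch below is only an assumption about the general shape of such a filter, not the real system: the `POLICY_TERMS` table, its placeholder terms and both function names are hypothetical, and a real classifier would rely on far richer signals than keyword matching.

```python
from typing import Dict, List, Optional, Set

# Hypothetical placeholder terms per policy; they exist only to make the sketch runnable.
POLICY_TERMS: Dict[str, Set[str]] = {
    "violent": {"placeholder_violent_term"},
    "spam": {"placeholder_spam_term"},
}

def first_violation(prediction: str) -> Optional[str]:
    """Return the name of the first policy the prediction violates, or None."""
    words = set(prediction.lower().split())
    for policy, terms in POLICY_TERMS.items():
        if words & terms:
            return policy
    return None

def filter_predictions(candidates: List[str]) -> List[str]:
    """Keep only the candidates that trigger no policy."""
    return [c for c in candidates if first_violation(c) is None]

candidates = ["san francisco weather", "placeholder_spam_term offer"]
print(filter_predictions(candidates))
# ['san francisco weather']
```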

A guiding principle here is that autocomplete should not shock users with unexpected or unwanted predictions.

This principle and our autocomplete policies are also why popular searches as measured in our Google Trends tool might not appear as predictions within autocomplete. Google Trends is designed as a way for anyone to deliberately research the popularity of search topics over time. Autocomplete removal policies are not used for Google Trends.

Why inappropriate predictions happen

We have systems in place designed to automatically catch inappropriate predictions and not show them. However, we process billions of searches per day, which in turn means we show many billions of predictions each day. Our systems aren’t perfect, and inappropriate predictions can get through. When we’re alerted to these, we strive to quickly remove them.

It’s worth noting that while some predictions may seem odd, shocking or cause a “Who would search for that!” reaction, looking at the actual search results they generate sometimes provides needed context. As we explained earlier this year, the search results themselves may make it clearer in some cases that predictions don’t necessarily reflect awful opinions that some may hold but instead may come from those seeking specific content that’s not problematic. It’s also important to note that predictions aren’t search results and don’t limit what you can search for.

Regardless, even if the context behind a prediction is good, even if a prediction is infrequent, it’s still an issue if the prediction is inappropriate. It’s our job to reduce these as much as possible.

Our latest efforts against inappropriate predictions

To better deal with inappropriate predictions, we launched a feedback tool last year and have been using the data since to make improvements to our systems. In the coming weeks, expanded criteria applying to hate and violence will be in force for policy removals.

Our existing policy protecting groups and individuals against hateful predictions only covers cases involving race, ethnic origin, religion, disability, gender, age, nationality, veteran status, sexual orientation or gender identity. Our expanded policy for search will cover any case where predictions are reasonably perceived as hateful or prejudiced toward individuals and groups, without being limited to particular demographics.

With the greater protections for individuals and groups, there may be exceptions where compelling public interest allows for a prediction to be retained. With groups, predictions might also be retained if there’s clear “attribution of source” indicated. For example, predictions for song lyrics or book titles that might be sensitive may appear, but only when combined with words like “lyrics” or “book” or other cues that indicate a specific work is being sought.
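The “attribution of source” exception can be pictured as a simple check: a prediction that has already been flagged as sensitive is retained only if it also carries a cue word. The sketch below is an illustrative assumption, not the actual rule. The function name is hypothetical, and the cue list contains only the two words mentioned above, whereas the real set of cues is not specified here.

```python
# Cue words taken from the examples in the text; the full real list is unknown.
ATTRIBUTION_CUES = {"lyrics", "book"}

def keep_sensitive_prediction(prediction, cues=ATTRIBUTION_CUES):
    """Return True if an already-flagged prediction includes an attribution cue."""
    return any(word in cues for word in prediction.lower().split())

# Hypothetical predictions, assumed to have been flagged as sensitive upstream.
print(keep_sensitive_prediction("example sensitive title lyrics"))  # True
print(keep_sensitive_prediction("example sensitive title"))         # False
```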

As for violence, our policy will expand to cover removal of predictions which seem to advocate, glorify or trivialize violence and atrocities, or which disparage victims.

How to report inappropriate predictions

Our expanded policies will roll out in the coming weeks. We hope that the new policies, along with other efforts with our systems, will improve autocomplete overall. But with billions of predictions happening each day, we know that we won’t catch everything that’s inappropriate.

Should you spot something, you can report using the “Report inappropriate predictions” link we launched last year, which appears below the search box on desktop:

For those on mobile or using the Google app for Android, long press on a prediction to get a reporting option. Those using the Google app on iOS can swipe to the left to get the reporting option.

By the way, if we take action on a reported prediction that violates our policies, we don’t just remove that particular prediction. We expand to ensure we’re also dealing with closely related predictions. Doing this work means sometimes an inappropriate prediction might not immediately disappear, but spending a little extra time means we can provide a broader solution.

Making predictions richer and more useful

As said above, our predictions show in search boxes that range from desktop to mobile to within our Google app. The appearance, order and some of the predictions themselves can vary along with this.

When you’re using Google on desktop, you’ll typically see up to 10 predictions. On a mobile device, you’ll typically see up to five, as there’s less screen space.
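The per-device limits amount to trimming a ranked list to a maximum length. In this minimal sketch the limits of 10 and five come from the text, while the `MAX_PREDICTIONS` table and the `trim_for_device` function are hypothetical names introduced for illustration.

```python
# Typical maximums stated in the text; actual counts can vary.
MAX_PREDICTIONS = {"desktop": 10, "mobile": 5}

def trim_for_device(ranked_predictions, device):
    """Return at most the typical number of predictions for `device`."""
    return ranked_predictions[:MAX_PREDICTIONS[device]]

ranked = [f"prediction {i}" for i in range(1, 13)]  # 12 hypothetical candidates
print(len(trim_for_device(ranked, "desktop")))  # 10
print(len(trim_for_device(ranked, "mobile")))   # 5
```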

On mobile or Chrome on desktop, we may show you information like dates, the local weather, sports information and more below a prediction:

In the Google app, you may also notice that some of the predictions have little logos or images next to them. That’s a sign that we have special Knowledge Graph information about that topic, structured information that’s often especially useful to mobile searchers:

Predictions also will vary because the list may include any related past searches you’ve done. We show these to help you quickly get back to a previous search you may have conducted:

You can tell if a past search is appearing because on desktop, you’ll see the word “Remove” appear next to a prediction. Click on that word if you want to delete the past search.

On mobile, you’ll see a clock icon on the left and an X button on the right. Tap the X to delete a past search. In the Google app, you’ll also see a clock icon. To remove a prediction, long press on it in Android or swipe left on iOS to reveal a delete option.

You can also delete all your past searches in bulk, or by particular dates or those matching particular terms using My Activity in your Google Account.

More about autocomplete

We hope this post has helped you understand more about autocomplete, including how we’re working to reduce inappropriate predictions and to increase the usefulness of the feature. For more, you can also see our help page about autocomplete.

You can also check out the recent Wired video interview below, where our vice president of search Ben Gomes and the product manager of autocomplete Chris Haire answer questions about autocomplete that came from…autocomplete!