The era of the camera: Google Lens, one year in

THE CAMERA: IT’S NOT JUST FOR SELFIES & SUNSETS

My most recent camera roll runs the gamut from the sublime to the mundane:

There is, of course, the vacation beach pic, the kid’s winter recital, and the one (or ten) obligatory goofy selfies. But there’s also the book that caught my eye at a friend’s place, the screenshot of an insightful tweet, and the tracking number on a package.

As our phones go everywhere with us and storage becomes cheaper, we’re taking more photos of more types of things. Alongside the sunsets and selfies, an estimated 10 to 15 percent of the pictures being taken are of practical things like receipts and shopping lists.

To me, using our cameras to help us with our day-to-day activities makes sense at a fundamental human level. We are visual beings: by some estimates, 30 percent of the neurons in the cortex of our brain are devoted to vision. Every waking moment, we rely on our vision to make sense of our surroundings, remember all sorts of information, and explore the world around us.

The way we use our cameras is not the only thing that’s changing: the tech behind our cameras is evolving too. As hardware, software, and AI continue to advance, I believe the camera will go well beyond taking photos—it will help you search what you see, browse the world around you, and get things done.

That’s why we started Google Lens last year as a first step in this journey. Last week, we launched a redesigned Lens experience across Android and iOS, and brought it to iOS users via the Google app.

I’ve spent the last decade leading teams that build products which use AI to help people in their daily lives, through Search, Assistant and now Google Lens. I see the camera opening up a whole new set of opportunities for information discovery and assistance. Here are just a few that we’re addressing with Lens:

GOOGLE LENS: SEARCH WHAT YOU SEE

Some things are really hard to describe with words. How would you describe the dog below if you wanted to know its breed? My son suggested, “Super cute, tan fur, with a white patch.” 🙄

With Lens, your camera can do the work for you, turning what you see into your search query.

Lens identifies this dog as a Shiba Inu.

So how does Lens turn the pixels in your camera stream into a card describing a Shiba Inu?

The answer, as you may have guessed, is machine learning and computer vision. But a machine learning algorithm is only as good as the data that it learns from. That’s why Lens draws on the hundreds of millions of Image Search queries for “Shiba Inu,” along with the thousands of images returned for each one, as the basis for training its algorithms.

Google Images returns numerous results to a query for “Shiba Inu.”

Next, Lens uses TensorFlow—Google’s open source machine learning framework—to connect the dog images you see above to the words “Shiba Inu” and “dog.”

Finally, we connect those labels to Google's Knowledge Graph, with its tens of billions of facts on everything from pop stars to puppy breeds. This helps us understand that a Shiba Inu is a breed of dog.
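To make the last two steps concrete, here is a minimal sketch of going from classifier scores to a label, and from that label to broader facts. It is an illustration only: the scores, the tiny `TOY_KNOWLEDGE_GRAPH` dictionary, and the function names are all hypothetical stand-ins, not how Lens or the Knowledge Graph is actually built.

```python
# Hypothetical stand-in for the Knowledge Graph: each entity points to a
# broader type through an "is_a" link. The real graph is far larger.
TOY_KNOWLEDGE_GRAPH = {
    "Shiba Inu": {"is_a": "dog breed"},
    "dog breed": {"is_a": "dog"},
    "dog": {"is_a": "animal"},
}


def top_label(scores):
    """Return the label with the highest classifier score."""
    return max(scores, key=scores.get)


def ancestors(entity, graph):
    """Follow "is_a" links upward and return the chain of broader types."""
    chain = []
    seen = {entity}
    current = entity
    while current in graph and "is_a" in graph[current]:
        current = graph[current]["is_a"]
        if current in seen:  # guard against accidental cycles
            break
        seen.add(current)
        chain.append(current)
    return chain


def describe(scores, graph):
    """Pick the best label and look up what kind of thing it is."""
    label = top_label(scores)
    return label, ancestors(label, graph)


# Made-up scores, as if an image model had already looked at the photo.
hypothetical_scores = {"Shiba Inu": 0.91, "Akita": 0.06, "fox": 0.03}
label, kinds = describe(hypothetical_scores, TOY_KNOWLEDGE_GRAPH)
print(label, kinds)
```

The point of the split is that the vision model only has to produce a label; everything else we know about that label comes from the graph lookup.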

Of course, Lens doesn’t always get it right:

Why does this happen? Oftentimes, what we see in our day-to-day lives looks fairly different from the images on the web used to train computer vision models. We point our cameras from different angles, at various locations, and under different types of lighting. And the subjects of these photos don’t always stay still. Neither do their photographers. This trips Lens up.

We’re starting to address this by training the algorithms with more pictures that look like they were taken with smartphone cameras.

This is just one of the many hard computer science problems we will need to solve. Just like with speech recognition, we’re starting small, but pushing on fundamental research and investing in richer training data.

TEACHING THE CAMERA TO READ

As we just saw, sometimes the things we're interested in are hard to put into words. But there are other times when words are precisely the thing we’re interested in. We want to look up a dish we see on a menu, save an inspirational quote we see written on a wall, or remember a phone number. What if you could easily copy and paste text like this from the real world to your phone?

To make this possible, we’ve given Lens the ability to read and let you take action with the words you see. For example, you can point your phone at a business card and add it to your contacts, or copy ingredients from a recipe and paste them into your shopping list.

Google Lens can copy and paste text from a recipe.

To teach Lens to read, we developed an optical character recognition (OCR) engine and combined that with our understanding of language from search and the Knowledge Graph. We train the machine learning algorithms using different characters, languages and fonts, drawing on sources like Google Books scans.

Sometimes it’s hard to distinguish between similar-looking characters, like the letter “o” and the digit zero. To tell them apart, Lens uses language and spell-correction models from Google Search to better understand what a character or word most likely is. Just as Search knows to correct “bannana” to “banana,” Lens can guess that “c00kie” is likely meant to be “cookie” (unless you're a l33t h4ck3r from the 90s, of course).
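Here is a toy version of that idea. Instead of a real language model, this sketch uses a plain vocabulary lookup: it tries every combination of look-alike characters and keeps the first one that forms a known word. The `CONFUSABLE` table, the `VOCABULARY` set, and the function names are assumptions made up for illustration, not the models Lens uses.

```python
from itertools import product

# Hypothetical table of characters an OCR engine might mix up.
# Each key maps to the characters it could plausibly have been.
CONFUSABLE = {
    "0": "0o",
    "o": "o0",
    "1": "1l",
    "l": "l1",
}

# Hypothetical stand-in for a language model: a tiny word list.
VOCABULARY = {"cookie", "banana", "flour", "sugar"}


def candidates(token):
    """Yield every spelling reachable by swapping look-alike characters."""
    options = [CONFUSABLE.get(ch, ch) for ch in token.lower()]
    return ("".join(chars) for chars in product(*options))


def correct(token, vocabulary):
    """Return the first candidate found in the vocabulary, else the token."""
    for candidate in candidates(token):
        if candidate in vocabulary:
            return candidate
    return token


print(correct("c00kie", VOCABULARY))
print(correct("h4ck3r", VOCABULARY))
```

With this word list, “c00kie” resolves to “cookie,” while “h4ck3r” is left alone because no swap turns it into a known word. Note that the number of candidates grows quickly with the number of ambiguous characters, which is one reason a real system would score likely readings rather than enumerate them all.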

We also use this OCR engine for other tasks, such as reading product labels. Lens can now identify more than one billion products, four times the number it covered at launch.

THE CAMERA AS A TOOL FOR CURIOSITY

When you’re looking to identify a cute puppy or save a recipe, you know what you want to search for or do. But sometimes we're not after answers or actions—we're looking for ideas, like shoes or jewelry in a certain style.

Now, style is even harder to put into words. That’s why we think the camera—a visual input—can be powerful here.

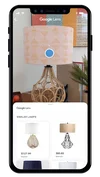

With the style search feature in Lens you can point your camera at outfits and home decor to get suggestions of items that are stylistically similar. So, for example, if you see a lamp you like at a friend’s place, Lens can show you similar designs, along with useful information like product reviews.
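One common way to find “stylistically similar” items is to represent each image as a vector of numbers and rank catalog items by how closely their vectors point in the same direction as the query. The sketch below shows that general technique with cosine similarity. It is an assumption about how such a feature could work, not a description of Lens; the three-number vectors and the `CATALOG` entries are invented for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def most_similar(query, catalog, k=2):
    """Return the names of the k catalog items closest to the query."""
    ranked = sorted(
        catalog,
        key=lambda name: cosine_similarity(query, catalog[name]),
        reverse=True,
    )
    return ranked[:k]


# Invented "style" vectors; a real system would get these from a model.
CATALOG = {
    "brass arc floor lamp": [0.9, 0.1, 0.2],
    "brass desk lamp": [0.8, 0.2, 0.1],
    "wicker basket": [0.1, 0.9, 0.3],
}

# Pretend this vector came from the lamp at your friend's place.
query = [0.85, 0.15, 0.2]
print(most_similar(query, CATALOG))
```

Here the two lamps rank ahead of the basket because their vectors sit much closer to the query's direction.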

Lens offers style suggestions.

THE ERA OF THE CAMERA

A decade ago, I started at Google as a bright-eyed product manager enamored with the potential of visual search. But the tech simply wasn’t there. Fast forward to today and things are starting to change. Machine learning and computational photography techniques allow the Pixel 3 to capture great photos both day and night. Deep learning algorithms show promise in detecting signs of diabetic retinopathy from retinal photographs. Computer vision is now starting to let our devices understand the world and the things in it far more accurately.

Looking ahead, I believe that we are entering a new phase of computing: an era of the camera, if you will. It’s all coming together at once—a breathtaking pace of progress in AI and machine learning; cheaper and more powerful hardware thanks to the scale of mobile phones; and billions of people using their cameras to bookmark life’s moments, big and small.

As computers start to see like we do, the camera will become a powerful and intuitive interface to the world around us; an AI viewfinder that puts the answers right where the questions are—overlaying directions right on the streets we’re walking down, highlighting the products we’re looking for on store shelves, or instantly translating any word in front of us in a foreign city. We’ll be able to pay our bills, feed our parking meters, and learn more about virtually anything around us, simply by pointing the camera.

In short, the camera can give us all superhuman vision.